The joy of working with legacy code

Most programmers dislike working on legacy code. It often feels like finding your way through a swamp where every step could be fatal. The swamp in this case is a vast collection of lengthy, confusingly structured functions, written in an obscure language, with mystical variable names, poor documentation and antiquated tooling. And if there are any tests at all, they certainly have insufficient coverage. Reviving legacy code is an art in itself: one that, once mastered, is extremely valuable and can bring a lot of joy and satisfaction.

At VORtech we mostly work on extending and improving existing code, so we’ve seen all the daunting quirks that are typical of legacy code. We are proud of our ability to revive such code. Let us explain why this matters so much and how it works.

The cost of legacy code

Why spend your budget on improving code quality, while (hopefully) getting the same functionality as before? A very important reason is Total Cost of Ownership (TCO): the effort of improving poor-quality code is an investment that can bring huge returns in terms of

reduced effort at further development and maintenance,

reduced risk of undetected errors,

reduced risk that continuing with the code becomes untenable once the original compiler or operating system is no longer available.

An often-used metaphor is “Technical Debt”, which equates the extra time spent on maintaining legacy code to paying interest on a loan. When the interest cost starts consuming a significant part of the budget, it makes sense to invest in reducing the debt.

Confusing code

Typical of legacy code are files and functions with thousands of lines, with multiple branches (if – else if – else), deeply nested loops, jumps (GO TO), and early exits and returns. Such constructs turn the code into a pinball machine in which the developer can be kicked in any odd direction at any time. The usual vague or even misleading function names, and variable names without vowels, certainly don’t help you find your way. Adding to the confusion are obsolescent language constructs, such as COMMON blocks in FORTRAN, or complex pointer arithmetic and implicit type conversions in C.

How did the code base get into such a state, despite the best intentions and professional skills of its developers? Most often, the cause is the long history of the code. It was written with a specific context and purpose in mind. Over time, the context shifted, new applications and features were added, and the code outgrew its original playground. And perhaps a bit too often, an issue had to be fixed on short notice and a quick shortcut or workaround seemed the best choice.

The lean machine grew into a capricious, many-headed monster that no one dares to touch or poke anymore. At the same time, the value of the application has grown with it, thanks to its versatility and the well-validated, reliable results it produces. The users have become experienced with the program and know what to expect.

How to fix legacy code

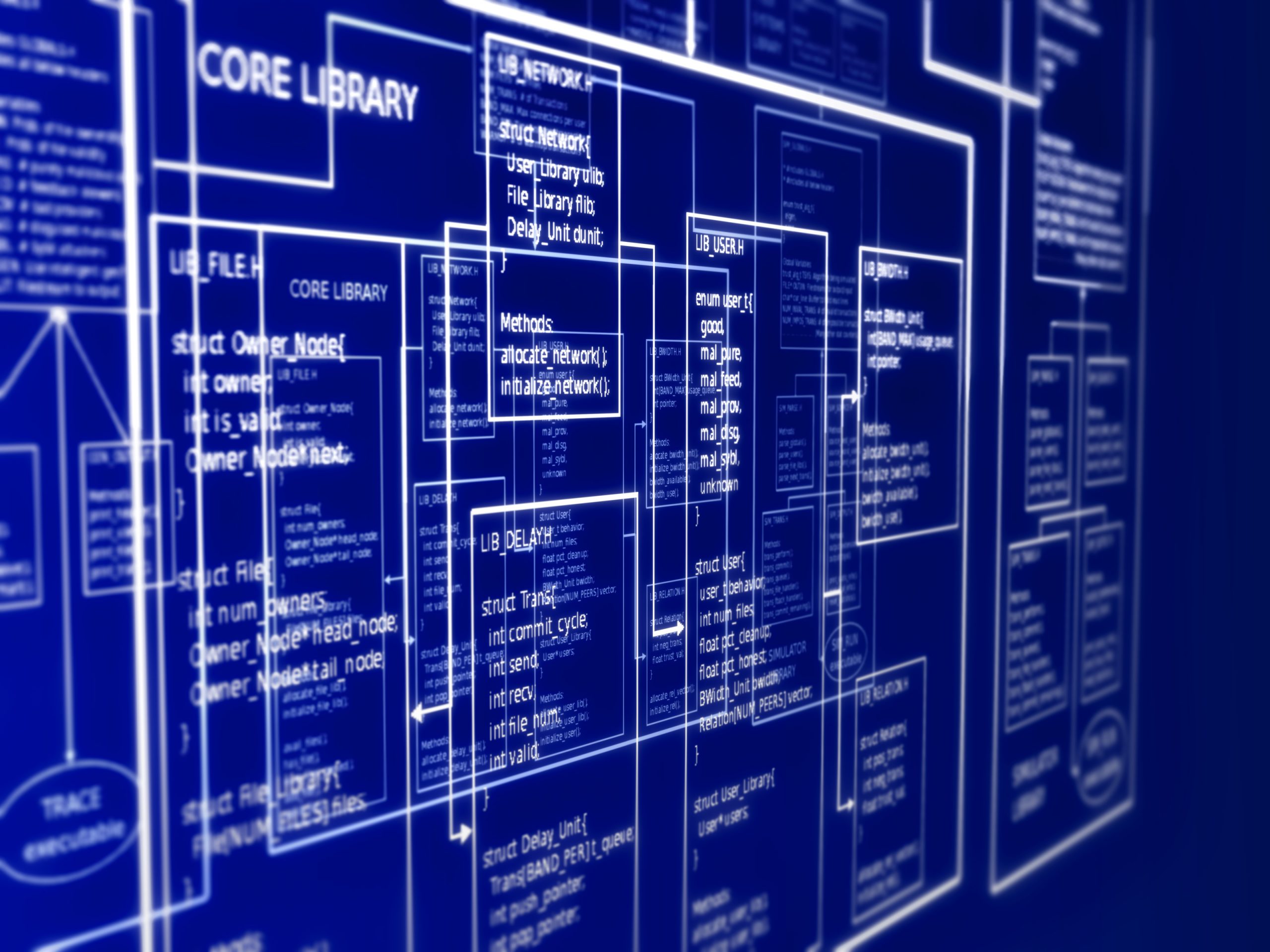

Legacy code can be fixed by systematically or gradually refactoring functions and reorganizing the code, reverse-engineering what its original meaning might have been: by replacing cryptic variable names with meaningful ones, by building meaningful units such as functions, modules or classes, and by writing comments and other documentation. And then, suddenly, it all falls into place.
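As an illustration of renaming and extracting meaningful units, here is a minimal Python sketch. The legacy-style routine `clc` and its refactored counterpart `sum_positive` (with the helper `has_invalid_value`) are hypothetical, invented for this example. The refactored version has descriptive names and a flat structure, yet returns exactly the same results, which can be checked by running both on the same inputs.

```python
# Hypothetical legacy-style routine: cryptic names, nested branches and
# early returns. Sums the positive entries among the first n values of x,
# doubled if f == 1, and returns -1.0 as an error code if any value < -100.
def clc(x, n, f):
    s = 0.0
    for i in range(n):
        if f == 1:
            if x[i] > 0.0:
                s = s + x[i] * 2.0
            else:
                if x[i] < -100.0:
                    return -1.0
        else:
            if x[i] > 0.0:
                s = s + x[i]
            else:
                if x[i] < -100.0:
                    return -1.0
    return s


# Refactored version: same behavior, meaningful names, flat structure.
ERROR_CODE = -1.0          # kept so that existing callers still work
INVALID_THRESHOLD = -100.0


def has_invalid_value(values):
    """True if any value lies below the (hypothetical) validity threshold."""
    return any(v < INVALID_THRESHOLD for v in values)


def sum_positive(values, double_weight=False):
    """Sum of the positive values, optionally doubled; ERROR_CODE on invalid input."""
    if has_invalid_value(values):
        return ERROR_CODE
    weight = 2.0 if double_weight else 1.0
    # Same summation order as the legacy loop, so results are bitwise identical.
    return sum((weight * v for v in values if v > 0.0), 0.0)


if __name__ == "__main__":
    # Characterization check: old and new must agree on the same inputs.
    samples = [[], [0.5, 2.5], [-5.0, 4.0], [3.0, -150.0]]
    for xs in samples:
        for f in (0, 1):
            assert clc(xs, len(xs), f) == sum_positive(xs, double_weight=(f == 1))
    print("legacy and refactored versions agree")
```

Keeping the old routine around during the transition, and comparing it against the new one, is a cheap way to confirm that a refactoring step did not change behavior before the old code is deleted.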

Let’s look at a few specific steps in this process.

How to replace global variables

High on the list of legacy code horrors is the global variable. What is a global variable? It is a variable that can be modified anywhere in the code. Such a variable may therefore change at any moment and cause the most surprising changes in the rest of the program flow. It would be beautiful if it were not so malign. To make things even more complex, global variables can be hidden, shadowed, equivalenced (as with the FORTRAN EQUIVALENCE statement) or worse.

When writing quick-and-dirty code, global variables are extremely convenient. That is why many programmers could not (and still can’t) resist the temptation to use them. To get them under control, replace them with local variables and pass values through argument lists. This not only removes the global variable, but also reveals the meaning of the functions and subroutines that were modifying it. Better still, related variables can be clustered into meaningful objects.
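A minimal Python sketch of this refactoring, assuming a hypothetical simulation that keeps its current time and step count in module-level globals. In the legacy style, `step_legacy` changes those globals behind the caller's back; in the refactored style the same data is clustered into a `SimulationClock` object that is passed in and returned explicitly. All names here are made up for illustration.

```python
from dataclasses import dataclass

# --- Legacy style (hypothetical): state lives in module-level globals ---
current_time = 0.0
nstep = 0


def step_legacy(dt):
    # Modifies two globals; nothing in the signature reveals this.
    global current_time, nstep
    current_time += dt
    nstep += 1


# --- Refactored: the same state clustered into one explicit object ---
@dataclass(frozen=True)
class SimulationClock:
    current_time: float = 0.0
    nstep: int = 0


def step(clock: SimulationClock, dt: float) -> SimulationClock:
    # Everything the function reads and changes is visible at the call site.
    return SimulationClock(current_time=clock.current_time + dt,
                           nstep=clock.nstep + 1)


if __name__ == "__main__":
    clock = SimulationClock()
    for _ in range(3):
        clock = step(clock, 0.5)
    print(clock)  # SimulationClock(current_time=1.5, nstep=3)
```

Making the dataclass frozen is a design choice: a step returns a new clock instead of mutating the old one, so no function can change the state without that being visible where it is called.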

Replacing global variables also solves several other problems they cause, for instance race conditions when the code runs multi-threaded.
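The following Python sketch, assuming that independent cases are run concurrently in threads, shows why argument passing helps: the hypothetical function `integrate` keeps all intermediate state in local variables, so concurrent calls cannot interfere with each other and no lock is needed. A version that accumulated into a shared global would need explicit synchronization, because an update such as `total += dt` is a read-modify-write that is not guaranteed to be atomic.

```python
from concurrent.futures import ThreadPoolExecutor


def integrate(n_steps: int, dt: float) -> float:
    # Hypothetical toy computation. All intermediate state is local, so
    # concurrent calls cannot overwrite each other's values.
    total = 0.0
    for _ in range(n_steps):
        total += dt
    return total


def run_cases_in_parallel(cases, max_workers=4):
    # Each case carries its own arguments; there is no shared global
    # accumulator, hence no lock is required.
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(lambda case: integrate(*case), cases))


if __name__ == "__main__":
    print(run_cases_in_parallel([(4, 0.25), (2, 0.5), (10, 0.0)]))  # [1.0, 1.0, 0.0]
```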

How to set up a test environment

Another barrier to working on legacy code is the lack of tests. Without tests, you can never tell whether a code change has unexpected side effects. Working on code without tests is like driving at high speed into dense fog. However, the right approach can reduce the risk.

The first thing to do in this situation is to ask the customer for as many example cases as possible. From these examples, a set of cases that complement each other as much as possible is selected. Together they form a test environment that serves as a rudimentary basis for regression testing: after every code change, these tests are run and their results are compared against the results produced by the original code.
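A minimal sketch of such a regression check in Python. It assumes that the reference results of the original code have been stored per case as a mapping from output name to value, and that a hypothetical callable `run_model(case)` runs the modified code for one case. Values are compared with a tolerance rather than for exact equality, because refactoring can legitimately change the order of floating-point operations; the tolerances shown are placeholders to be tuned per application.

```python
import math


def run_regression_suite(run_model, reference_results, rel_tol=1e-9, abs_tol=1e-12):
    """Compare the modified code against stored results of the original code.

    run_model         -- hypothetical callable: case name -> {output name: value}
    reference_results -- {case name: {output name: value}} from the ORIGINAL code
    Returns a list of (case, output, reference value, new value) mismatches.
    """
    mismatches = []
    for case, expected in reference_results.items():
        actual = run_model(case)
        for name, ref_value in expected.items():
            value = actual.get(name)  # None if the output disappeared
            if value is None or not math.isclose(value, ref_value,
                                                 rel_tol=rel_tol, abs_tol=abs_tol):
                mismatches.append((case, name, ref_value, value))
    return mismatches


if __name__ == "__main__":
    # Placeholder data; in practice the references would come from running
    # the original program on the customer's example cases.
    reference = {"case_a": {"flux": 1.0, "peak": 2.5}, "case_b": {"flux": 0.0}}
    outputs = {"case_a": {"flux": 1.0 + 1e-12, "peak": 2.5}, "case_b": {"flux": 0.0}}
    print(run_regression_suite(lambda case: outputs[case], reference))  # []
```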

The next step is to develop additional tests whenever significant program flows turn up that the current test bench does not cover, and to write unit tests for new code. As this goes on, the test coverage grows and errors are found ever more quickly. Often, existing but previously undetected problems are found as well. It is great to find and fix such an error, especially if it is a nasty one.
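For new code, unit tests can be written alongside it from the start. The Python sketch below uses the standard `unittest` module; the helper `relative_change` is a hypothetical piece of new code, chosen because its edge cases (a zero or negative reference value) are exactly the kind of thing unit tests catch early.

```python
import unittest


def relative_change(old: float, new: float) -> float:
    """Hypothetical new helper: relative change of a result w.r.t. a reference.

    Returns 0.0 when both values are zero and raises ValueError when only
    the reference is zero, instead of silently dividing by zero.
    """
    if old == 0.0:
        if new == 0.0:
            return 0.0
        raise ValueError("relative change undefined for old == 0 and new != 0")
    return (new - old) / abs(old)


class TestRelativeChange(unittest.TestCase):
    def test_increase(self):
        self.assertAlmostEqual(relative_change(2.0, 3.0), 0.5)

    def test_negative_reference(self):
        # abs() in the denominator keeps a positive sign for an increase.
        self.assertAlmostEqual(relative_change(-2.0, -1.0), 0.5)

    def test_both_zero(self):
        self.assertEqual(relative_change(0.0, 0.0), 0.0)

    def test_zero_reference_raises(self):
        with self.assertRaises(ValueError):
            relative_change(0.0, 1.0)


if __name__ == "__main__":
    unittest.main()
```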

Conclusion

For VORtech, our ultimate reward for working on legacy code is bringing value to our customers. Through our work, they can move forward with their existing, stable code base for many years to come, and they are spared from having to declare it end-of-life and rewrite it from scratch, with all the risks and costs that entails.

A more extensive discussion of our approach to legacy code can be found in the blog series by Koos Huijssen.